The security landscape has changed rapidly, and we have seen how failures in software security can lead to serious consequences. As software increasingly controls physical devices, critical services, and business operations, expectations toward developers continue to grow. Software development is no longer just about writing code that works, but about how reliably, securely, and predictably that code behaves.

Software developers have always been expected to deliver working code. Today, they are also expected to ensure reliability, security, and the ability to justify their solutions from a security perspective.

“High-quality code and a functioning result have always been expected, but today the importance of quality and verification is understood differently,” says Mika Maunumaa, Senior Software Engineer.

The change is visible in how quality, security, and verification are treated: compromises on them are no longer accepted as they once were. New code is introduced only after extensive testing against predefined criteria, covering functionality, security, and the impact on other systems.

Marko Koivuporras, Senior Software Developer, describes the change from a practical perspective:

“In the past, it was enough to have an editor and a compiler. Today, developers must consider security requirements in the development environment, programming language, and the project itself. The working environment has expanded significantly, and on top of that, developers are expected to be able to prevent, detect, and fix vulnerabilities in code.”

Software development is no longer about implementing individual components. It is about building systems where individual decisions affect the entire system—often in unexpected ways.

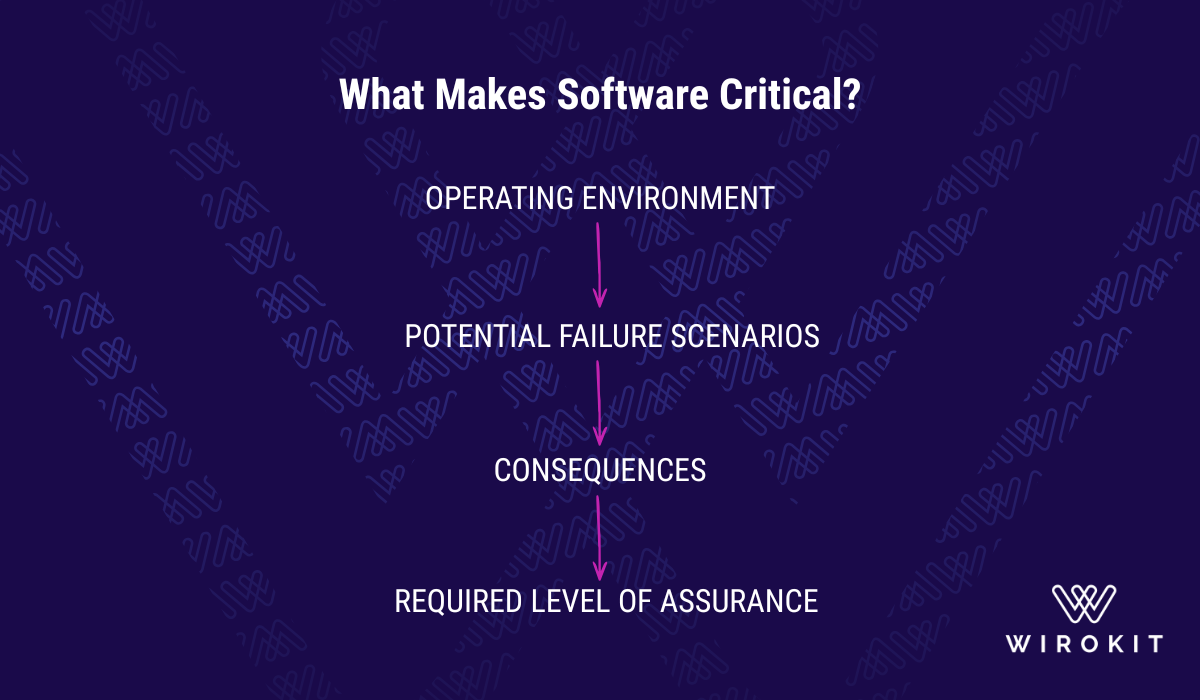

Criticality is often discussed, but rarely defined in practical terms.

“Money and human life. Those ultimately define criticality,” Maunumaa summarizes.

Koivuporras adds:

“In addition to safety and health, criticality is directly linked to business impact and the handling of personal data. This is where the level of requirements increases significantly.”

Criticality is not merely a technical classification, but is determined by risk—by the likelihood of failures and the impact they may cause. In practice, this comes down to what happens when software fails.

When software is part of a system where failure can interrupt production, compromise safety, or violate regulatory requirements, the nature of software development changes.

In many organizations, however, criticality is still recognized too late. Software is often developed for a long time as if it were not critical, even when its operating environment clearly makes it so.

When this is finally recognized, organizations often find themselves in a situation where the development approach must be corrected mid-way. This becomes costly—or in some cases, no longer feasible.

“Secure development reduces risks, supports business continuity, and improves overall quality.”

In critical software development, functionality alone is not a sufficient measure. What matters is whether the system can be trusted—and whether that trust can be justified.

“It is impossible to fully verify that software works correctly,” Maunumaa says.

“There is no amount of testing that can prove the absence of defects. That is why reliability is not based on perfection, but on how systematically it is built and verified.”

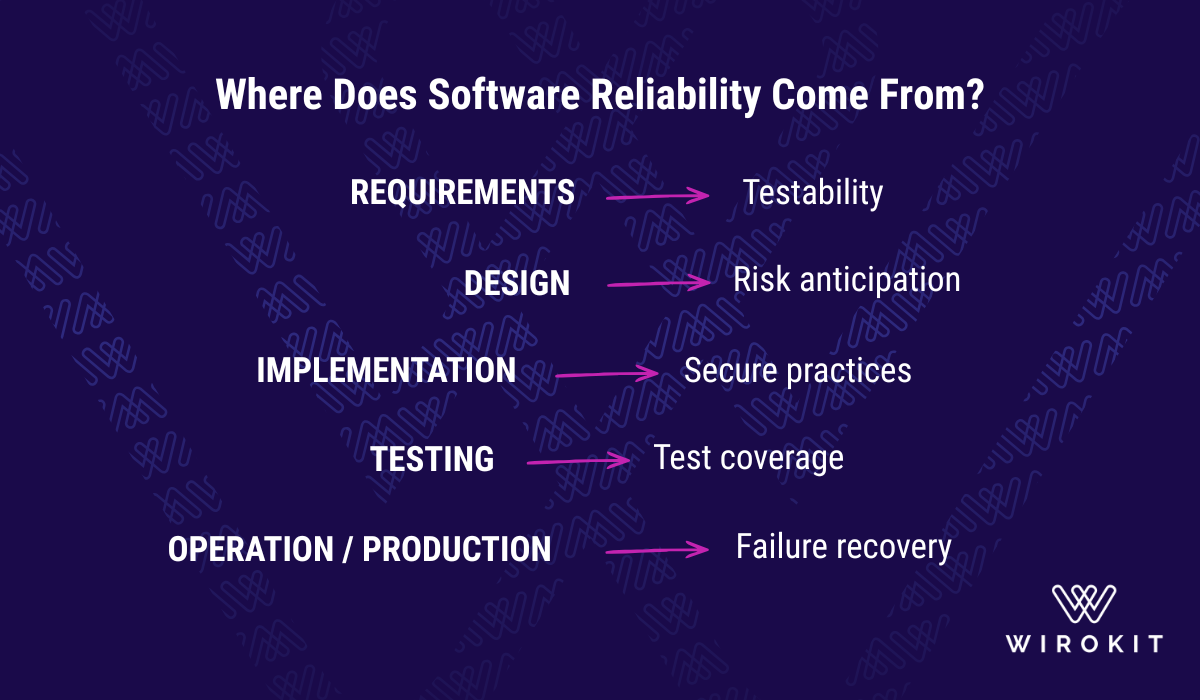

If requirements are not defined in a way that makes them testable, their fulfillment cannot be verified. In such cases, quality remains an assumption: the software appears to work, but there is no justified evidence behind it.
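To illustrate the difference between a vague and a testable requirement, the following Python sketch turns "the alarm should trigger when the tank is too full" into a requirement with concrete thresholds and checks each clause with an assertion. The requirement ID `REQ-ALARM-1`, the 90 % and 85 % thresholds, and the `LevelAlarm` class are hypothetical and exist only for this example.

```python
"""Sketch: a vague requirement rewritten as a testable one.

Vague:    "The alarm should trigger when the tank is too full."
Testable: "REQ-ALARM-1 (hypothetical): the alarm is raised when the level
          reaches 90.0 % or more, and is cleared only when the level
          drops below 85.0 %."
"""

RAISE_AT = 90.0     # percent, hypothetical threshold from REQ-ALARM-1
CLEAR_BELOW = 85.0  # percent, hypothetical threshold from REQ-ALARM-1


class LevelAlarm:
    """Tank level alarm with hysteresis, as specified in REQ-ALARM-1."""

    def __init__(self) -> None:
        self.active = False

    def update(self, level_percent: float) -> bool:
        """Feed one level reading and return whether the alarm is active."""
        if not self.active and level_percent >= RAISE_AT:
            self.active = True
        elif self.active and level_percent < CLEAR_BELOW:
            self.active = False
        return self.active


def test_req_alarm_1() -> None:
    """Each assertion checks one verifiable clause of REQ-ALARM-1."""
    alarm = LevelAlarm()
    assert alarm.update(89.9) is False  # below the raise threshold
    assert alarm.update(90.0) is True   # raised exactly at the threshold
    assert alarm.update(87.0) is True   # stays raised inside the hysteresis band
    assert alarm.update(84.9) is False  # cleared below the clear threshold


if __name__ == "__main__":
    test_req_alarm_1()
    print("REQ-ALARM-1 verified")
```

Because every clause has a number attached, each one can be checked and either passes or fails; the vague version offers nothing to assert against.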

Reliability does not come from individual tests or checks. It is the result of a development process in which requirements, design, implementation, and testing all consistently support it. Without this, reliability remains an assumption, and its true cost becomes visible only in production.

One of the most persistent misconceptions in software development is that experience shows in writing better code. In reality, it shows in what is deliberately left out.

“A junior developer hears a requirement and starts coding a solution immediately,” Maunumaa explains.

An experienced developer takes a different approach. They identify risks in advance and recognize when a simple solution is not sufficient and should therefore not be chosen.

Koivuporras describes this shift:

“It’s no longer about surviving the act of coding. The focus has moved to the system level and architecture.”

In critical environments, this means a shift in mindset. Developers do not base their solutions on assumptions; instead, they design on the expectation that the system will encounter failures.

Preventive actions—such as testing, threat modeling, audits, and secure development and maintenance—are always more cost-effective than managing incidents under pressure.

“It’s about identifying where things can go wrong and making sure they are addressed in advance,” Koivuporras summarizes.

Many organizations assume that quality and security are addressed during development. In practice, the most significant problems arise before development even begins.

“Before a project even starts, it is already underestimated,” Maunumaa states.

The issue is rarely a single implementation error. More often, the starting point is weak. Requirements are not defined in a testable way, estimates are overly optimistic, and the role of testing remains unclear.

The consequences only become visible later, when it is no longer possible to properly verify the finished system.

In practice, this is reflected in simple but critical principles, such as:

“You can never trust user input—it must always be validated.”
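A minimal sketch of this principle in Python, assuming a hypothetical control interface that receives a setpoint as text from an untrusted source. The input is checked against an allowlist pattern before conversion and then range-checked; anything else is rejected rather than "repaired". The function name `parse_setpoint` and the 0 to 100 range are assumptions for illustration.

```python
import re

# Hypothetical limits for an illustrative setpoint (e.g. valve opening in %).
MIN_SETPOINT = 0.0
MAX_SETPOINT = 100.0

# Allowlist: 1-3 ASCII digits, optionally followed by up to 2 decimals.
# [0-9] is used instead of \d so that non-ASCII digits are not accepted.
_SETPOINT_PATTERN = re.compile(r"[0-9]{1,3}(?:\.[0-9]{1,2})?")


def parse_setpoint(raw: str) -> float:
    """Validate an untrusted setpoint string and return it as a float.

    Raises TypeError for non-string input and ValueError for anything that
    does not match the allowlist or falls outside the permitted range.
    """
    if not isinstance(raw, str):
        raise TypeError("setpoint must be provided as a string")
    text = raw.strip()
    # Reject by default: only the explicitly allowed format gets through,
    # which also excludes "nan", "inf", exponents, signs and underscores.
    if not _SETPOINT_PATTERN.fullmatch(text):
        raise ValueError(f"malformed setpoint: {raw!r}")
    value = float(text)
    if not MIN_SETPOINT <= value <= MAX_SETPOINT:
        raise ValueError(f"setpoint out of range: {value}")
    return value


if __name__ == "__main__":
    print(parse_setpoint("42.5"))
    for bad in ["nan", "150", "42; DROP TABLE x"]:
        try:
            parse_setpoint(bad)
        except ValueError as exc:
            print("rejected:", exc)
```

Rejecting malformed input outright, instead of guessing what the sender meant, keeps the accepted input space small and easy to test.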

This is not about individual practices, but about how software development is approached from the very beginning. It requires developers to have a comprehensive understanding of security and the ability to operate in environments shaped by security requirements and regulations.

When software operates as part of a physical system, error handling is fundamentally different from traditional application development.

“You can’t reboot it like a laptop—it has to recover from failures on its own,” Koivuporras explains.

This observation changes the nature of development. It is not enough for software to function under normal conditions—it must also withstand disruptions and recover from them in a controlled way.

In physical environments, systems must be able to detect failures, limit their impact, and continue operating without external intervention. Reliability means the ability to function even when things do not go as planned.
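The detect, limit, and recover pattern can be sketched as a small supervisor loop. This is a simplified Python illustration under assumed names (`supervise`, `enter_safe_state`), not a description of any particular system: failures are caught and logged, the task is restarted with exponential backoff, and once a restart budget is exhausted the system falls back to a safe state instead of retrying forever.

```python
import logging
import time
from typing import Callable

log = logging.getLogger(__name__)


def supervise(
    task: Callable[[], None],
    enter_safe_state: Callable[[], None],
    max_restarts: int = 3,
    base_delay_s: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Run `task`, restarting it after failures without operator intervention.

    Returns True if the task eventually completed, or False if the restart
    budget ran out and the system was put into a safe state instead.
    """
    for attempt in range(max_restarts + 1):
        try:
            task()
            return True
        except Exception as exc:  # detect: catch the fault instead of crashing
            log.warning("attempt %d failed: %s", attempt + 1, exc)
            if attempt < max_restarts:
                # recover: wait with exponential backoff before restarting
                sleep(base_delay_s * 2 ** attempt)
    # limit impact: stop retrying and fall back to a known safe state
    enter_safe_state()
    return False


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky_read() -> None:
        """Hypothetical sensor read that times out twice, then succeeds."""
        calls["n"] += 1
        if calls["n"] < 3:
            raise OSError("sensor timeout")

    ok = supervise(flaky_read, enter_safe_state=lambda: print("safe state"))
    print("completed:", ok)
```

The `sleep` parameter is injectable so the restart behaviour can be tested without real delays. In embedded systems, a comparable role is often played by a hardware watchdog rather than application code alone.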

This is a key difference compared to earlier approaches that focused solely on delivering functionality. In reliable software development, failure is not an exception—it is an assumption that must be addressed.

Both Maunumaa and Koivuporras agree on one thing: software development is not becoming simpler.

“Security, testing, and requirements management are becoming increasingly important, but they are still not fully understood,” Maunumaa says.

At the same time, AI is changing development work. It can accelerate code production, but it does not remove responsibility—in fact, it increases it.

“If more code is produced faster, the importance of testing and verification increases. With AI, large volumes of code can be generated quickly, but their correctness cannot be assumed. This makes verification even more critical,” Koivuporras states.

This creates a tension that many organizations have not yet resolved. Development is expected to be faster, while requirements for reliability continue to grow.

Today’s software developers do not just build functionality. They build systems that must endure time, usage, and inevitable failures.

This fundamentally changes the nature of the work. Developers must understand how systems behave as a whole—not just how individual features perform.

In critical environments, software is not released once and then left alone. It is continuously updated, evolving through changes, security updates, and new requirements. At the same time, it must withstand failures, external disruptions, and situations that cannot be fully anticipated.

This means that security, quality, and reliability cannot be added afterward. They are the result of decisions made throughout design, implementation, and the entire development lifecycle.

Functionality alone is not enough. What matters is whether the system behaves in a way that is understandable, predictable, and justifiable—so that it can be trusted even in exceptional situations.

Software security is part of societal resilience. Software developers are not just building systems—they are contributing to a more reliable society.

Without clear practices, the pressure to develop faster often leads to a situation where speed increases but confidence in the system decreases. Secure development requires clearly defined rules that guide development work and are consistently followed.

In modern software development, writing functional code is no longer sufficient; cybersecurity and reliability have become essential parts of a developer's professional expertise. As software increasingly governs critical physical infrastructure and core business processes, the consequences of failure can be severe in terms of both money and safety. Criticality is not merely a technical classification; it is determined by the operating environment and the real-world impact of potential errors. Security requirements and systematic verification must therefore be integrated into development from the earliest requirement definitions to avoid costly and complex fixes later in the project.

The role of an experienced developer has shifted from pure coding toward architectural oversight and risk anticipation. Systems must be designed to recover from failures on their own, and as AI-assisted development increases the sheer volume of code, rigorous testing and quality assurance are more vital than ever. Reliability is not the result of a single test; it must be substantiated by systematic practices throughout the entire development lifecycle. Ultimately, this is about strengthening societal security and resilience, with software developers serving as the primary architects of more robust and predictable systems.